Information Retrieval Models - Overview

This page provides a structured overview of modern information retrieval models, from traditional lexical approaches to neural and LLM-based architectures.

Note: The content on this page is primarily a condensed reformatting and adaptation of the comprehensive research paper, "A Survey of Model Architectures in Information Retrieval" by Xu et al. (2026).

1. Traditional Models

In this section, we briefly review traditional IIR models prior to neural methods, with a focus on the Boolean model, vector space model, and probabilistic model. These models, which serve as the foundation for later developments in IR, are built upon the basic unit of a “term” in their representations.

Boolean Model

▼

The Boolean model treats information retrieval as a set-membership problem. A document is either relevant or not relevant to a query, based on whether it satisfies a Boolean expression built from terms using logical operators such as AND, OR, and NOT.

Theory

Let D = (d₁, d₂, ..., dₙ) be a set of documents and T = (t₁, t₂, ..., tₘ) a vocabulary of index terms. Each document can be represented as a binary vector over T, where each component indicates whether a term is present (1) or absent (0).

For each term t, we define the set of documents in which it occurs:

S(t) = { d ∈ D | t occurs in d }

A query is a Boolean expression over terms using logical operators AND (∧), OR (∨), and NOT (¬).

The meaning of a query is defined using set operations:

S(A ∧ B) = S(A) ∩ S(B)

S(A ∨ B) = S(A) ∪ S(B)

S(¬A) = D − S(A)

The result of a query Q is the set S(Q) ⊆ D, containing all documents that satisfy the Boolean expression. Documents are not ranked—retrieval is strictly binary.

Example

Query: “information AND retrieval NOT database”

Returns only documents that contain both “information” and “retrieval”, but exclude any document containing “database”.

Vector Space Model

▼

The Vector Space Model represents documents and queries as vectors in a shared space where each dimension corresponds to a term. Instead of exact matching, it measures how “close” a document is to a query: the more similar their vectors, the more relevant the document. This allows the system to rank results by degree of relevance rather than a simple yes/no decision.

Theory

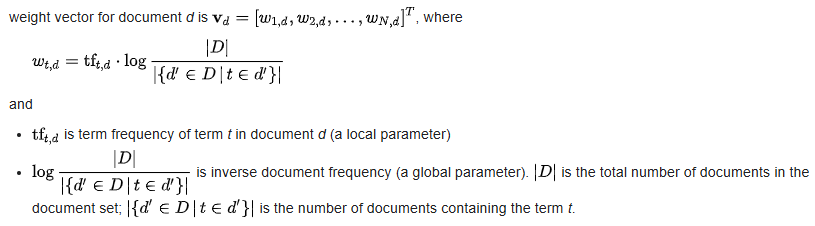

Each document is represented as a vector of term weights, often computed using TF-IDF, which combines how frequent a term is in a document with how rare it is across the collection.

Similarity between a query and a document is then computed using vector operations e.g. cosine similarity which measures the angle between the two vectors.

TF-IDF (Term Frequency-Inverse Document Frequency) is a numerical statistic that reflects how important a term is to a document in a collection or corpus. Here is the definition:

Note: Other weighting schemes exist, such as BM25, which we will discuss in the probabilistic model section.

Note: Other weighting schemes exist, such as BM25, which we will discuss in the probabilistic model section.

Example

Suppose the query is “machine learning” and a document contains both terms frequently. Its vector will align closely with the query vector, resulting in a high similarity score. Another document containing only “machine” will still be retrieved, but ranked lower due to weaker overlap.

Advantages

Unlike Boolean models, it supports ranking of results and partial matching, meaning documents can still be retrieved even if they don’t contain all query terms. This leads to more flexible and realistic search behavior.

Probabilistic Model

▼

The probabilistic model approaches Information Retrieval by asking a simple question: “What is the probability that this document is relevant to the query?” Instead of exact matches or geometric similarity, it ranks documents based on how likely they are to be useful, given the presence or absence of query terms.

Each term contributes evidence toward relevance. If a term appears frequently in relevant documents but rarely in irrelevant ones, it increases the probability that a document containing it is relevant. The model often assumes terms are independent, which simplifies computation while still producing strong results in practice.

Theory

The classic probabilistic approach is the Binary Independence Model, where each term is either present or absent in a document. Based on a set of judged documents, the system estimates how strongly each term is associated with relevance.

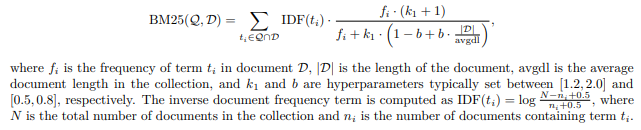

More advanced models like BM25 extend this idea by considering term frequency and document length. Terms that appear often in a document increase its relevance score, but with diminishing returns, while longer documents are normalized to avoid unfair advantage. Here is the definition of the BM25 scoring function:

Example

Suppose you search for “machine learning basics”. A document that contains all three terms multiple times—especially if those terms are rare across the collection—will receive a high score. If another document only contains “machine” once, it will be ranked much lower because the probability of relevance is weaker.

Advantages

- Provides a clear probabilistic interpretation of relevance.

- Forms the foundation of highly effective ranking functions like BM25.

- Handles term importance more flexibly than purely binary models.

- Works well in real-world search engines due to its balance of simplicity and performance.

While these models are effective, they rely on fixed, hand-crafted scoring formulas based on term statistics. Even with tuning, their structure remains limited. This motivates a shift to supervised machine learning, where models learn how to combine multiple relevance signals from labeled data—an approach known as Learning-to-Rank.

2. Learning to Rank

Traditional retrieval models rely on manually designed scoring functions, but Learning-to-Rank (LTR) takes a fundamentally different approach: it uses machine learning to automatically learn how to order documents by relevance. Instead of assigning scores based on fixed formulas, LTR trains a model on labeled data—queries paired with documents and their relevance judgments—so that it can predict rankings that closely match human preferences. The key idea is that ranking is not about predicting absolute values, but about getting the relative ordering of documents correct, especially at the top of the list where user attention is concentrated.

Pointwise Approach

The pointwise approach treats ranking as a standard regression or classification problem. Each document is considered independently, and the model learns to predict a relevance score for a given query-document pair. Once all documents are scored, they are simply sorted in descending order.

While this approach is simple and computationally efficient, it has an important limitation: it ignores the relationships between documents. Ranking is inherently a comparative task, but pointwise methods evaluate each item in isolation. As a result, a model might assign reasonable scores individually but still produce a poor overall ranking because it does not explicitly learn which documents should be ranked above others.

Pairwise Approach

The pairwise approach addresses this limitation by reframing ranking as a comparison problem. Instead of predicting absolute scores, the model learns to decide which of two documents is more relevant for a given query. Training data is constructed as pairs of documents with known preferences (e.g., document A should rank higher than document B).

This formulation directly captures the core goal of ranking—getting the order right. Many influential methods are based on this idea, where the model is penalized whenever it predicts the wrong ordering between two items. However, as highlighted in the literature, pairwise methods still operate on local comparisons and do not fully account for the structure of the entire ranked list.

Another challenge is computational: the number of possible document pairs grows quadratically with the number of documents, making training more expensive. Despite this, pairwise approaches have been widely successful and form the foundation of many practical ranking systems.

Listwise Approach

The listwise approach represents the most advanced formulation of Learning-to-Rank. Instead of focusing on individual documents or pairs, it considers the entire ranked list as a single training instance. The model is trained to produce rankings that directly optimize evaluation metrics such as NDCG, which measure the quality of the full ordering.

This idea is strongly emphasized in modern research: ranking should be treated as a prediction problem over lists, not isolated elements. Listwise methods define loss functions over permutations of documents, often using probabilistic models (e.g., permutation probability or top-k probability) to capture the likelihood of a correct ranking.

In practice, listwise approaches tend to achieve the best performance because they align training directly with how ranking quality is evaluated. However, they are also the most complex to design and optimize, as they require modeling interactions across the entire set of documents.

Why Learning-to-Rank Matters

Learning-to-Rank has become the dominant paradigm in modern search engines, recommendation systems, and large-scale information retrieval systems. Its strength lies in its ability to integrate a wide range of signals—text relevance, user behavior, personalization features—into a single learned model.

Perhaps the most important insight is the progression from pointwise → pairwise → listwise methods. This progression reflects an increasing awareness that ranking is not about individual predictions, but about optimizing the quality of the entire ordered list. As systems evolve, there is a clear trend toward methods that better capture global structure, even if they require more sophisticated modeling and computation.

Traditional Learning-to-Rank models are limited because they depend on manually engineered features. This prevents them from learning in an end-to-end way and makes it difficult for them to handle the lexical gap, where relevant documents use different wording than the query. Since their decisions are based on predefined statistical signals rather than true meaning, they struggle to capture semantics, motivating the development of neural ranking models that learn directly from raw text.

3. Neural Ranking Models

Neural ranking models were developed to overcome the limitations of traditional learning-to-rank methods by learning semantic meaning directly from raw text. This allows them to bridge the lexical gap—understanding that terms like “computer” and “PC” are related—while removing the need for manual feature engineering. These models are generally divided into two types: representation-based models, which encode documents into vectors for fast and efficient retrieval, and interaction-based models, which jointly process queries and documents for deeper, more precise matching at a higher computational cost.

Representation-based Model

▼

Representation-based models can be seen as a natural evolution of the Vector Space Model. Instead of relying on sparse term-based vectors, these models encode both queries and documents into dense vectors within a shared semantic space. Relevance is then computed using simple similarity functions like cosine similarity or dot product.

A defining characteristic of this approach is the independent encoding of queries and documents. Each is processed separately by the same (or similar) neural encoder, with no interaction between them during encoding. This makes the design of the encoder critically important—it must compress a variable-length text into a single fixed-size vector that captures its full meaning.

Core Idea

Imagine transforming every document into a single point in a high-dimensional space. Queries are mapped into the same space, and relevance becomes a geometric problem: find the closest vectors. Because documents are encoded independently, their representations can be precomputed, making retrieval extremely efficient at scale.

Example models: DSSM (Deep Structured Semantic Model), CNN-based Models (C-DSSM), Sequential Models (LSTM)

One of the earliest neural approaches was the DSSM. It uses techniques like word hashing to handle large vocabularies and feeds the resulting representations into multi-layer perceptrons (MLPs). Queries and documents are encoded separately, and their similarity is computed using cosine similarity.

Later models replaced simple feedforward networks with convolutional neural networks (CNNs). These apply 1D convolutions over sequences of word embeddings, allowing the model to capture local patterns like phrases and n-grams. A max-pooling layer then extracts the most important features to form the final vector representation.

Another improvement comes from recurrent neural networks, especially LSTMs. These process text sequentially, allowing them to capture word order and long-range dependencies. The final hidden state is often used as the overall representation of the document or query.

Hierarchical & Attention-based Models

Some approaches recognize that documents have a hierarchical structure (words → sentences → paragraphs). These models use attention mechanisms to focus on the most important parts at each level, producing richer and more informative representations.

Other Representation Approaches

Beyond DSSM variants, several methods explore how to build effective text embeddings. Doc2Vec learns fixed-length representations in an unsupervised way, while simpler techniques like averaging word embeddings can surprisingly perform well for short texts. However, these simpler methods often fail to capture word order and deeper contextual meaning.

Strengths

Representation-based models are powerful at capturing global semantic meaning. Because document vectors can be computed in advance, they are highly efficient for large-scale retrieval systems. This makes them well-suited for applications like search engines where speed is critical.

Limitations

Compressing an entire document into a single fixed-size vector inevitably leads to information loss. These models may struggle with exact term matching and fine-grained relevance signals. As a result, they can miss subtle but important details—an issue that motivates the development of interaction-based models.

Interaction-based Model

▼

Unlike representation-based models that compress queries and documents into single vectors, interaction-based models take a fundamentally different approach. They process the query and document together from the very beginning, building a detailed interaction map that captures how individual terms relate to each other.

Instead of summarizing meaning early, these models construct a fine-grained interaction representation (often called an interaction matrix), where each entry reflects the similarity between a query term and a document term. Neural networks then learn hierarchical matching patterns from this structure, ultimately producing a single relevance score for the query-document pair.

Interaction Matrix (Core Idea)

The core building block is the interaction matrix: a grid where rows represent query terms and columns represent document terms. Each cell contains a similarity signal (e.g., cosine similarity or exact match). This matrix can be thought of like an image, where patterns in the grid reveal how well the document matches the query at a very detailed level.

Example, models: MatchPyramid (CNN over Interactions), DRMM (Histogram-based Matching), K-NRM (Kernel-based Soft Matching)

One of the earliest models, MatchPyramid, treats the interaction matrix like an image and applies convolutional neural networks (CNNs). The CNN detects local matching patterns such as matching phrases or adjacent terms (bigrams), using filters and pooling layers to extract increasingly abstract features.

The Deep Relevance Matching Model (DRMM) emphasizes the importance of exact term matches. Instead of a single interaction matrix, it builds a matching histogram for each query term. These histograms group similarity scores into bins (e.g., exact match, strong match, weak match), capturing the distribution of how document terms relate to each query term. A neural network then learns how much each pattern contributes to overall relevance.

The Kernel-Based Neural Ranking Model (K-NRM) introduces a more refined way to capture similarity using kernel functions. Each kernel corresponds to a specific similarity level (such as exact or approximate matches) and produces soft match counts. This allows the model to measure not just whether terms match, but how strongly they match across different levels of similarity.

Extensions like Conv-KNRM go even further by incorporating n-gram matching, enabling the model to capture phrase-level interactions rather than just individual word matches.

Attention & Multi-Perspective Matching

More advanced models introduce attention mechanisms and multi-perspective comparisons. These approaches weigh the importance of different query terms and analyze interactions from multiple angles (e.g., different pooling strategies or filter sizes). This helps the model focus on the most important parts of the query when evaluating a document.

Positional & Structural Signals

Interaction-based models can also capture term adjacency and positional information. This means they understand not just which words match, but also whether they appear close together or in meaningful order— an important factor for detecting phrases and context.

Strengths and Trade-offs

Because they analyze fine-grained interactions, these models often achieve superior ranking performance compared to representation-based approaches. However, this comes at a cost: they are computationally expensive, since each query-document pair must be processed jointly through the network.

In practice, they are often used in re-ranking stages, where a smaller set of candidate documents is evaluated in detail rather than scoring an entire corpus.

Hybrid Model

▼

Hybrid models combine the strengths of both representation-focused and interaction-focused approaches. Instead of choosing between efficiency and effectiveness, they aim to achieve both—leveraging fast vector representations while still capturing detailed term-level interactions.

These models recognize that global semantic understanding and exact term matching are complementary. By integrating both signals, hybrid architectures often outperform systems that rely on only one strategy.

Parallel Architectures (e.g., DUET)

A classic example is DUET, which uses two neural networks in parallel. One focuses on exact term interactions between query and document, while the other learns distributed representations for semantic similarity. Their outputs are combined to produce a final ranking score, capturing both precise matches and deeper meaning.

Classification-Based Ranking (XMC)

Some hybrid approaches reformulate ranking as a classification problem, where each document is treated as a label. Instead of comparing similarities, the model predicts which documents are most relevant. Techniques like attention mechanisms help focus on the most important parts of the query when making these predictions.

Why Hybrid Works

By combining local interactions with global representations, hybrid models achieve better ranking performance than either approach alone. Modern variations also optimize efficiency by reusing precomputed representations, reducing the cost of comparing queries against large document collections.

Neural ranking models revealed the power of the transformer's attention mechanism for capturing relevance. The real paradigm shift, however, came when the field moved away from training models from scratch and began leveraging large pre-trained transformers like BERT. Instead of designing task-specific architectures on top of static embeddings, researchers started fine-tuning a single, deeply contextualized model for IR tasks. This shift redefined existing tradeoffs and led to modern approaches such as cross-encoders and bi-encoders.

4. Pre-trained transformers Models

BERT revolutionized natural language processing and information retrieval by introducing powerful contextual representations. Its success comes from multi-head attention, which captures relationships between words, and large-scale pre-training, which allows it to understand semantics and world knowledge.

Transformer models fall into three main categories:

- • Encoder-only models (like BERT): use bidirectional attention (both left and right context) and are well-suited for understanding text, making them ideal for retrieval and ranking tasks.

- • Decoder-only models (like GPT): focus on text generation using unidirectional attention, its application to IR is less direct and need architectural changes.

- • Encoder-decoder models: leverage a "sequence-to-sequence" architecture where a bidirectional encoder creates a rich input representation that guides an autoregressive decoder in generating output. In IR, they can be framed as rerankers (generating a “relevant” or “irrelevant” token), or as generative retrievers that directly generate document identifiers.

In information retrieval, encoder-based transformers are especially important. They form the backbone of modern retrieval systems, where the key challenge is balancing deep interaction between query and document with computational efficiency.

This leads to two main architectural approaches:

- • Cross-Encoder (Deep Interaction): By processing the concatenated query and document as a single sequence, this model enables deep, exhaustive interaction between every query and document token. While this approach achieves state-of-the-art ranking performance, its high computational demand restricts its practical use to the reranking stage.

- • Bi-Encoder (Separable Pre-computation): By using separate encoders to process the query and document independently, this architecture generates distinct fixed-size vectors. Because these document vectors can be pre-computed and indexed offline, the system can perform lightning-fast similarity searches, making it the ideal choice for first-stage retrieval in large-scale collections.

Modern systems often combine these ideas, using fast retrieval models to narrow candidates and more powerful interaction models to rerank results, achieving both efficiency and high-quality ranking.

Training strategies for Transformer-based IR

▼

Although model architectures and training strategies are distinct concepts, their co-evolution is a defining pattern of modern Information Retrieval. The architectural dichotomy between cross-encoders and bi-encoders deeply influences the training methodology, extending traditional loss functions into the deep learning paradigm.

Contrastive and Listwise Objectives

Contrastive learning works by teaching a model to recognize the "right" answer by comparing it against several "wrong" ones, a process rooted in a mathematical framework called InfoNCE. In the world of dense retrieval, this is most commonly used to train bi-encoders, where the system converts a query and a document into two separate vectors and measures the semantic closeness between them using a calculation like a dot product. During training, the bi-encoder is penalized whenever it fails to assign the highest probability to the truly relevant document out of a crowded field of distractors. For cross-encoders, which are more computationally intense because they analyze the query and document together as a single pair, the objective is simplified to a Negative Log-Likelihood loss to ensure the final scores clearly favor the correct candidate over the incorrect ones.

Hard Negative Mining (HNM)

A critical challenge in training bi-encoders is generating sufficiently difficult negative examples. Random sampling usually yields "easy" negatives that do not effectively challenge the model. Hard Negative Mining is essential because it exposes the model to cases where positive and negative document features are difficult to distinguish. Key strategies include:

- In-Batch Negatives (IBN): Leveraging other queries’ positive documents within the same minibatch as negative examples for the current query. This provides an excellent balance of efficiency and difficulty.

- Lexical Negatives: Using documents highly ranked by a traditional sparse model (like BM25) but not actually labeled as relevant.

- Iterative Hard Negative Mining: Periodically employing an existing dense retriever to mine difficult negatives from the collection (i.e., documents that the current model mistakenly ranks highly) to continually feed the model better training data.

Knowledge Distillation (KD)

To further narrow the effectiveness gap between efficient bi-encoders and powerful cross-encoders, the community widely adopted Knowledge Distillation. KD is a technique for training a smaller, efficient "student" model by transferring knowledge from a larger, more capable "teacher" model. In IR, the student model is trained to mimic the teacher’s output distribution over candidate texts by minimizing the Kullback-Leibler (KL) divergence between their softened probability distributions. Using a temperature hyperparameter creates a softer probability distribution, which helps transfer nuanced information. KD provides an essential bridge, allowing the efficiency of bi-encoders to approach the effectiveness ceiling set by cross-encoders.

The Cross-encoder for Reranking

▼

The most effective application of transformers in Information Retrieval involves full, deep interaction between query and document tokens. This architecture, known as a cross-encoder, takes the concatenated sequence of a query and a document as a single input. Popularized by models like monoBERT for reranking candidate passages from a first-stage retriever, it typically outputs a relevance score via a linear layer applied to the final [CLS] token's representation.

However, this cross-encoder schema faces two primary challenges: (1) fixed context length constraints (e.g., BERT's 512-token limit) which makes processing long documents difficult, and (2) limited expressive power that comes from relying on a single token’s fixed-dimensional representation (e.g., 768 dimensions for BERT).

Handling Long Documents (Chunk-and-Aggregate)

To bypass input constraints, chunk-and-aggregate approaches decompose the ranking problem. A long document is split into fixed-length passages or sentences, and a BERT-based cross-encoder scores each query-passage pair independently. These individual results are then combined to produce a final document-level score. An influential early example of this is BERT-MaxP, which simply uses the maximum passage score as the overall document score.

Two Primary Aggregation Strategies

Once a document is chunked and processed, systems must combine the data. This is typically done in one of two ways:

- Score-Pooling: This method applies simple mathematical operations—such as taking the maximum, sum, or first passage score—to combine the scalar relevance scores directly.

- Representation Aggregation: This method addresses both the length limit and the single-vector expressiveness limitation. Instead of collapsing signals into a single score, it gathers the rich, low-dimensional [CLS] representations from each passage. This creates a comprehensive document-level feature set that is processed by additional neural networks (like CNNs or transformers), as seen in notable systems like PARADE and CEDR.

The Scalability vs. Interaction Trade-off

While chunk-and-aggregate approaches successfully handle long documents, they fundamentally limit query-document interactions to the passage level. This creates an information bottleneck, where passage scores or low-dimensional representations constrain the model’s ability to capture document-wide relevance patterns. Ultimately, this leaves system designers with a defining characteristic of cross-encoders: a persistent tradeoff between computational scalability and interaction richness.

Bi-encoder

▼

While some architectures (like cross-encoders) provide high accuracy by evaluating a query and document together, they are computationally massive because they require a full neural network pass for every single pair. The Bi-encoder architecture solves this bottleneck through separate processing.

A bi-encoder uses a backbone network (typically a Transformer) to encode the query and the document independently. The massive advantage here is efficiency: your entire document collection can be pre-computed into a vector index offline. At query time, retrieval becomes a fast approximate nearest neighbor (ANN) search problem or search with an inverted index data structure, avoiding costly neural network inference.

Learned Dense Retrieval

Dense retrieval uses dual-encoders (often BERT-based) to compress text into low-dimensional dense vectors—typically around 768 dimensions.

Instead of relying on exact word matches, these dense vectors capture deep semantic meaning, effectively solving the "vocabulary mismatch" problem found in traditional search.

In dense retrieval, relevance is calculated using simple similarity functions like dot product or cosine similarity between vector representations.

By pre-computing these document vectors, systems can perform high-speed ANN searches, making it possible to scan massive datasets nearly and instantaneously.

This architecture effectively solves the "vocabulary mismatch" problem of traditional models like BM25 by focusing on semantic meaning rather than exact keyword matches, leading to

significantly better performance in complex tasks like web search and open-domain question answering.

Learned Sparse Retrieval (LSR)

Sparse retrieval uses the exact same bi-encoder foundation but produces high-dimensional sparse vectors. The number of dimensions matches the model's entire vocabulary (tens of thousands of terms), but explicit regularization forces most of the term weights to zero. Because these vectors represent context-aware word importance and use non-negative weights, they seamlessly integrate with traditional inverted indexes (like Lucene). A major perk of LSR models (like SPLADE) is that they don't require expensive GPUs for inference, making them incredibly fast on standard CPUs. This method combines the best of both worlds: the precise keyword matching of traditional "bag-of-words" models and the deep semantic intelligence of modern Transformers. By using sparse representations the system can achieve a level of accuracy that rivals dense retrieval models while still utilizing the efficiency of standard inverted indexes.

Advanced and Hybrid Models

▼

Standard bi-encoders often face a performance bottleneck because they lack term-level interaction compared to more computationally expensive cross-encoders. To bridge this gap without sacrificing too much efficiency, researchers have developed advanced models that introduce more granular representations or combine entirely different retrieval paradigms.

Multi-Vector Representations & Late Interaction

To re-introduce query-document interaction without the full cost of a cross-encoder, some models represent queries and documents using multiple vectors rather than compressing them into a single dense vector. A prominent example is ColBERT, which assigns a contextualized vector to each token. It utilizes a "late interaction" step, comparing each query vector against all document vectors via a MaxSim operator. While this allows for highly effective, fine-grained term-level interactions, it comes with a trade-off: a drastically increased index size.

Hybrid Retrieval (Merging Strengths)

Another major direction is combining the distinct advantages of different retrieval systems. The simplest yet highly effective method is Ranklist Fusion (such as Reciprocal Rank Fusion). This approach merges the final ranked lists from a sparse retriever (like BM25, which is great at exact keyword matches) and a dense retriever (which excels at semantic meaning), requiring zero architectural changes to the underlying models.

Deep Integration & Sparse-Vector Intersection

Pushing the hybrid concept further, newer models integrate these signals at a fundamental architectural level. Architectures like COIL and uniCOIL enhance traditional bag-of-words retrieval with deep semantic embeddings. Furthermore, cutting-edge models like SLIM and SPLATE operate at the intersection of Learned Sparse Retrieval (LSR) and multi-vector representations. They map contextualized token vectors into a sparse, high-dimensional lexical space before performing late interaction, outperforming standard learned sparse retrieval baselines.

Further Improvements

▼

Beyond foundational architectures and ranking strategies, ongoing research focuses on making retrieval models more robust, adaptable, and transparent. Two major areas of advancement include refining how the backbone models are trained and decoding their internal decision-making processes.

Continual Training and Adaptation

Instead of redesigning the entire retrieval architecture, a highly effective strategy is to enhance the backbone language model itself through domain adaptation and continued pre-training. Specialized techniques, such as the Inverse-Cloze Task or architectures like Condenser, help efficiently compress rich text representations into standard tokens. Additionally, modern workflows often utilize a "middle-stage" training phase. By training on large-scale unlabeled text pairs bridging the gap between initial pre-training and supervised fine-tuning, these models achieve significant performance boosts over traditional two-stage pipelines.

Interpretability and Explainability

As Information Retrieval systems increasingly rely on complex Transformers, understanding why they make certain ranking decisions is critical. Mechanistic interpretability studies reveal that neural models rely much less on exact keyword matches and instead encode deep semantic relationships. Researchers are actively bridging the gap between dense and sparse retrieval by projecting intermediate neural representations back into human-readable vocabulary. Ultimately, as these AI systems become integral to real-world applications, ensuring they remain faithful, truthful, and trustworthy is just as important as optimizing their raw accuracy.

The evolution of IR architectures centers on the critical tradeoff between interaction depth and computational cost. This has led to a diverse spectrum of models, ranging from high-performance cross-encoder rerankers to highly efficient bi-encoder retrievers. By pushing the boundaries of the "retrieve-then-rank" paradigm through dense and sparse representations, the field has transitioned from specialized scoring components toward a new era: Large Language Models capable of both understanding and generating text.

5. LLMs for IR

The rise of Large Language Models (LLMs) marks a major evolution in IR, moving beyond simple text encoders to versatile systems capable of generation, reasoning, and planning. By leveraging their massive scale and alignment with human preferences, modern LLMs are no longer just feature extractors; they are actively reshaping the field by taking on complex new roles in retrieval, reranking, and direct information generation across various transformer-based architectures.

LLM as Retriever

▼

The dramatic increase in parameter count and training data has provided Large Language Models (LLMs) with richer world knowledge and a more nuanced understanding of semantics compared to their smaller, BERT-sized predecessors. This shift directly translates to major performance improvements in retrieval tasks.

Scaling Bi-Encoders

Researchers have empirically verified that a bi-encoder retriever’s performance directly benefits from increasing the backbone language model capacity. By scaling the size of dense retrievers with open-weight models like GPT-J and Llama-2, systems achieve significantly higher accuracy. Today, common text retrieval benchmarks like BEIR and MTEB are completely dominated by both proprietary and open-source LLM-based embedding models.

Decoder-Only Adaptation

A major challenge in using modern LLMs for retrieval is their architecture. Standard decoder-only models (like Llama) are unidirectional—optimized strictly for next-token prediction. This is not ideal for creating a single summary vector for a whole text. To fix this, researchers adapt these architectures. For example, LLM2Vec enables bidirectional attention and trains the model with specific adaptive tasks. Similarly, NV-Embed introduces a new latent attention mechanism to produce highly improved text representations.

Unified Modeling

The current frontier of research looks at bridging the gap between searching and generating. Models like GritLM take models from the Mistral family and fine-tune them simultaneously for both dense retrieval and text generation tasks using varying attention mechanisms. This demonstrates the powerful potential of unifying retrieval and generation seamlessly within a single foundation model.

LLM as Reranker

▼

Large Language Models (LLMs) have significantly advanced the reranking phase of search pipelines, introducing deep reasoning capabilities that surpass traditional cross-encoder architectures. This evolution is primarily driven by two architectural approaches: Fine-Tuning and Zero-Shot Prompting.

Fine-Tuned LLM Rerankers

Researchers have successfully adapted large encoder-decoder models (like T5) and decoder-only models (like Llama) to act as highly accurate rerankers. The training strategies mirror traditional ranking paradigms:

- Pointwise & Pairwise: Models are fine-tuned to output binary relevance decisions or numerical scores, utilizing established ranking losses like RankNet.

- Listwise Processing: Instead of scoring documents individually, architectures like ListT5 use a Fusion-in-Decoder approach to process and rank an entire list of candidates in a single forward pass. Similarly, models like QwQ-32B concatenate multiple passages, leveraging their strong reasoning capabilities to achieve highly efficient listwise reranking.

Long-Context Capabilities

The expanded context windows of modern LLMs have enabled a new paradigm for handling extensive text. Models such as RankLlama take advantage of massive context limits (e.g., truncating at 4,096 tokens) to perform superior pointwise reranking on lengthy documents, easily outperforming older BERT-based systems that were heavily constrained by token limits.

Zero-Shot & Few-Shot Prompting

Thanks to the advanced instruction-following abilities of modern LLMs (like GPT-4), highly effective listwise reranking can now be achieved without any task-specific fine-tuning. The model is simply prompted with a query and a list of candidate documents. To mitigate the high computational costs and context limits of processing full documents, cutting-edge research has even proposed training specialized rerankers that operate purely on compact passage embeddings.

Generative Retrieval

▼

Generative Retrieval represents a radical architectural shift enabled by Large Language Models (LLMs). While traditional systems follow a "retrieve-then-rerank" paradigm, generative retrieval fundamentally challenges this by reframing search as a sequence-to-sequence task. Instead of searching through an external index, an autoregressive language model is trained to directly generate the unique Document Identifiers (DocIDs) of relevant documents in response to a query.

The Evolution: Differentiable Search Index (DSI)

Building on foundational entity linking work like GENRE, the Differentiable Search Index (DSI) introduced generative retrieval to broader document search. Its core innovation is parameterizing the entire retrieval pipeline within a single neural model. All corpus information is encoded directly in the model parameters rather than external indices. DSI operates through two fundamental sequence-to-sequence tasks: Learn to Index and Learn to Retrieve.

Taxonomy of Document Identifiers

A critical design choice in generative retrieval is how documents are represented as targets for the model to generate:

- Atomic & String Identifiers: Early approaches explored using unique integers, titles, or URLs, generating them either through classification layers or autoregressively as string sequences.

- Semantic Identifiers: Proven to be the most effective, these are semantically structured identifiers created through clustering algorithms. This allows the model to associate document content with hierarchical, meaningful IDs.

Inference Constraints & Current Challenges

To ensure the model only outputs valid DocIDs during inference, systems (often using a T5 backbone) apply constrained beam search over a prefix tree (trie) constructed from all valid identifiers. Despite its innovation, generative retrieval struggles in dynamic environments. Updating the corpus usually requires computationally expensive full model retraining. Furthermore, scalability remains a major hurdle, as memory and computational needs grow substantially with larger document collections.

Large Language Models (LLMs) are transforming the broader Information Retrieval ecosystem far beyond simple architectural updates. They are now being utilized to automate the creation of high-quality synthetic training data—such as queries and "hard negative" passages—which significantly reduces the high costs traditionally associated with manual data labeling.

Beyond data synthesis, LLMs are increasingly serving as sophisticated proxies for human relevance judges, allowing for much faster and more scalable evaluation cycles. While their generative and instruction-following traits enable entirely new paradigms like zero-shot listwise reranking, the evolution of these architectures remains heavily dictated by the need to balance superior semantic matching with rigorous real-world efficiency and reliability.

6. Architectures for Diverse Scenarios

Multilingual Data

▼

Handling multiple languages introduces unique architectural challenges in search systems. While often used interchangeably, Multilingual Information Retrieval (MLIR) and Cross-Lingual Information Retrieval (CLIR) represent distinct scenarios. CLIR occurs when a user issues a query in a source language to retrieve documents in a different target language, requiring explicit cross-language alignment at query time. MLIR is a broader paradigm where a system indexes and searches over a collection containing multiple languages, emphasizing shared representations. Architecturally, CLIR can be viewed as a specialized case of MLIR.

Early Approaches: Translation & Statistics

Early systems framed cross-language retrieval as a synonymy problem, relying on static bilingual thesauri to map query terms into a shared conceptual space. Later, the availability of large parallel corpora in the 1990s enabled statistical models like Cross-Language Latent Semantic Indexing (CL-LSI) and Statistical Machine Translation (SMT). Architecturally, these early systems isolated linguistic complexity into a separate translation or pre-processing component, leaving the underlying retrieval engine entirely monolingual.

The Shift to Multilingual Transformers

The introduction of multilingual pre-trained transformers (such as mBERT and XLM-R) marked a fundamental architectural shift. Instead of relying on explicit multi-stage translation pipelines, these models use a single encoder to represent queries and documents across languages through shared semantic representation learning. This end-to-end approach substantially reduces the dependence on parallel data by matching meaning directly across languages.

Modern Enhancements: Adapters & Alignment

Because general-purpose multilingual encoders can sometimes lack sufficient cross-lingual alignment from pretraining, researchers introduced targeted improvements. Per-Language Modules add language-specific adapters or sparse fine-tuning masks to improve performance efficiently, proving especially useful for low-resource languages. Meanwhile, Alignment-Focused Models (like LaREQA, InfoXLM, and LaBSE) use parallel corpora and contrastive learning objectives to enforce tighter semantic alignment between cross-lingual pairs without needing to alter the underlying transformer structure.

Multimodal Data

▼

Multimodal retrieval requires a system to learn semantic correspondences across different types of data—such as images, video, and text—rather than treating them independently. The architecture of these systems has evolved significantly, driven by a continuous tension between interaction richness (how deeply the modalities understand each other) and computational efficiency.

1. From Shallow Fusion to Deep Embeddings

Early multimodal architectures relied on manual feature extraction (like bag-of-words for text and SIFT for images) followed by "shallow fusion" to project them into a shared space. The deep learning era replaced this manual engineering with end-to-end representation learning. Models began using joint embeddings and dependency trees to align global concepts and fine-grained fragments (like specific regions of an image matching specific words in a sentence).

2. The Dual-Encoder Revolution (CLIP & ALIGN)

The availability of massive vision-language datasets sparked a paradigm shift toward bi-encoder architectures trained with contrastive learning. Models like CLIP independently encode images and text, making them highly scalable and efficient for indexing billions of pairs. To capture finer details without losing this efficiency, researchers introduced late-interaction architectures (similar to ColBERT), which use multi-vector representations to enable token-level matching across modalities.

3. Rich Fusion & Unified Architectures

When tasks require complex reasoning (such as video-text retrieval), models move beyond bi-encoders to use deep cross-modal attention and hierarchical fusion. Because this full cross-attention is computationally expensive, it is often restricted to reranking a smaller set of candidates. Today, the cutting edge relies on Multimodal Large Language Models (MLLMs), which unify retrieval, deep reasoning, and generation within a single, flexible system without collapsing the underlying structure of the modalities.

Performance-Efficiency Trade-off

▼

Every retrieval system faces a fundamental tug-of-war between effectiveness (accuracy metrics like recall, MRR, or nDCG) and resource efficiency (latency, throughput, memory footprint, and indexing costs). The architectural choices you make—how documents are represented and scored—dictate exactly where your system lands on this tradeoff curve.

Single-Vector Models (The Speed Kings)

A highly common design for strict latency budgets is the bi-encoder architecture. Queries and documents are encoded entirely independently into single, compact vectors. Paired with highly optimized Approximate Nearest-Neighbor (ANN) indexes like HNSW, these models deliver millisecond-scale responses across massive datasets. However, squeezing an entire document into one vector means you might miss fine-grained, token-level matches.

Multi-Vector & Sparse Models (The Quality Champions)

To capture those missed nuances, researchers use richer interaction patterns. Late-interaction models (like ColBERT or ColPali) maintain token-granular signals, while learned sparse models (like SPLADE) produce high-dimensional representations that recover exact lexical matches. These models significantly boost search effectiveness, but they demand a higher computational budget, increased latency, and much larger index sizes.

Staged Retrieval (The Pragmatic Balance)

In production environments, the most pragmatic solution is often a multi-stage pipeline. A fast, inexpensive first stage (using BM25 or a single-vector ANN) casts a wide net to retrieve a small set of candidates. Then, a computationally expensive cross-encoder or interaction-heavy model reranks only those top results. This cascade achieves the high accuracy of complex models while preserving the throughput of simple ones.

Architectural Design Heuristics

- For low latency & low cost: Adopt a single-vector dual-encoder with efficient ANN search.

- For top-tier quality: Favor multi-vector or interaction-intensive models if your compute budget allows.

- For the best of both: Build a two-stage pipeline (coarse retrieval followed by a specialized reranker) and utilize index compression techniques to manage scale.

7. Directions and Challenges

Improve Fundamental IR Components

▼

At the heart of any Information Retrieval system are the models that extract features and estimate relevance. As the field moves toward more compute-intensive practices, scaling neural networks has proven successful. However, doing so sustainably requires major advancements in foundation model efficiency and relevance estimation.

Powerful & Efficient Foundation Models

Current transformer-based IR models demand vast amounts of training data and compute. To make them practical for real-world and real-time applications (like conversational search), researchers are focusing on several optimization fronts:

- Data & Training Efficiency: Developing architectures capable of zero-shot or few-shot learning, supported by low-precision training and parallel processing to reduce costs.

- Inference Optimization: Advanced compression techniques to rapidly handle variable-length queries in agent-based systems.

- Lite Foundation Models: Exploring compact models—such as wide-and-shallow versus deep-and-narrow BERT architectures, or Mixture-of-Experts—to maintain high performance with lower overhead.

Exploring Transformer Alternatives

While transformers dominate modern IR, the quadratic computational complexity of their attention mechanisms remains a severe bottleneck. The industry is actively exploring alternatives with theoretical linear complexity, such as Linear RNNs, State Space Models (SSMs), and Linear Attention. While current gains are still limited compared to highly optimized transformers, cracking the code on these architectures could revolutionize large-scale IR.

Flexible & Scalable Relevance Estimators

There is a continuous trade-off between accuracy and speed. Cross-encoders offer complex, highly accurate relevance estimation but are computationally expensive. Conversely, Bi-encoders are extremely fast (using inverted indexing or nearest neighbor search) but rely on simple linear similarity functions. To bridge this gap, modern approaches like ColBERT utilize late-interaction (MaxSim operations), while cutting-edge research explores Hypernetworks that dynamically generate query-specific neural networks to balance complex matching with scalable retrieval.

Retrieval for AI

▼

A major paradigm shift is occurring in Information Retrieval: the primary "user" of search systems is transitioning from humans to Artificial Intelligence models. Rather than just returning links for a person to read, modern IR increasingly serves Large Language Models (LLMs) for complex tasks like text generation, tool usage, reasoning, and planning. This fundamental change forces us to rethink how search systems are built and evaluated.

Redefining Relevance and Metrics

When the end-user is an ML model, the definition of relevance changes drastically. A document that appears disjointed or irrelevant to a human might contain the exact factual "nugget" an LLM needs to synthesize an answer. Furthermore, traditional human-centric ranking metrics often fail to align with how well an AI performs on downstream tasks. Moving forward, IR models must prioritize flexible, end-to-end system optimization over merely achieving high scores on traditional ranking leaderboards.

The Rise of Autonomous Search Agents

Resolving long-tail knowledge requests requires models capable of complex instruction following. This has given rise to Deep Research Agents—AI systems powered by large reasoning models (LRMs) that dynamically plan and iteratively retrieve external data. These agents typically access information in two ways:

- • API-Based Search: Fast, efficient, and structured interactions with search engine or database APIs. It scales well but relies on traditional IR constraints.

- • Browser-Based Search: Simulates real-time, human-like browsing on dynamic web pages. It is highly capable but introduces latency, high token consumption, and environmental complexity.

Open Challenges

While training LLMs to use search tools via reinforcement learning shows immense promise, significant hurdles remain. Currently, imbuing a retriever with strong reasoning capabilities requires massive backbone models (e.g., 7B or 32B parameters), which are often too heavy or expensive for production environments. The next great frontier in IR research is figuring out how to build lightweight, scalable retrievers with advanced reasoning skills, and how to jointly optimize the retriever and the language model in a single, unified framework.

RAG (Retrieval-Augmented Generation)

▼

Retrieval-Augmented Generation (RAG) refers to architectures that combine an external retrieval module with a generative model, enabling the system to access and condition on external knowledge before producing output. Early formulations deliberately separate retrieval and generation as distinct components. This architectural decoupling allows retrieval to be optimized independently from generation, maximizing modularity and scalability while drastically improving factual accuracy and reducing hallucinations.

A typical RAG pipeline is built upon four fundamental components:

- Corpus Embedding & Indexing: Utilizing vector stores or sparse indexes to ensure fast, scalable retrieval.

- Retriever Model: Using dense vectors or hybrid techniques to return the top-k most relevant items.

- Fusion Mechanism: Combining the retrieved content with the user's query (e.g., via text concatenation or cross-attention) before passing it forward.

- Generator: Typically an LLM that produces the final answer grounded strictly on the fused context.

Key Engineering & Modeling Considerations

Retriever-Generator Coupling

The architectural choice of where and how to combine retrieval results deeply affects quality and efficiency. Early approaches rely on the simple concatenation of text passages directly into the generator’s context window, requiring no structural changes to the LLM. However, more advanced designs utilize dedicated cross-attention layers or rerankers to improve precision and integration.

Multi-Modal & Structured Retrieval (GraphRAG)

Recent work extends RAG far beyond plain text. Frameworks like GraphRAG and related graph-enhanced pipelines integrate structured knowledge sources, enabling the retrieval of complex relational paths that are difficult to capture by standard vector similarity alone. These architectures introduce specialized components—like graph encoders and reasoning layers—allowing the system to extract multi-hop or entity-centric context before generation. While highly effective for structured reasoning, they do come at the cost of larger indexes and added pipeline complexity.

Adaptive Retrieval

Newer RAG pipelines weave advanced reasoning capabilities directly into the generator to dynamically decide whether to retrieve external information or generate the answer internally. Strong retrieval reduces generation errors, but adaptive retrieval strategies require careful task planning and retrieval coordination to ensure latency and pipeline complexity remain balanced.

Overall, RAG architectures represent a massive spectrum—from simple retrieval-plus-generation pipelines to highly complex, multi-component systems that integrate heterogeneous knowledge representations and adaptive retrieval planning to maximize both relevance and reasoning capabilities.

The journey of Information Retrieval architectures is a story of escalating semantic depth. The field has systematically evolved from the foundational principles of term-based matching to the statistical power of Learning-to-Rank, and finally to the deep semantic understanding of pre-trained transformers and Large Language Models (LLMs). Throughout this evolution, a core architectural tension has persisted: the fundamental tradeoff between effectiveness and efficiency.

Today, we stand at a critical inflection point. The primary "user" of search is shifting from a human at a search bar to an AI model within a larger system. IR is no longer just a tool for finding documents; it is becoming a foundational cognitive component for Retrieval-Augmented Generation (RAG), autonomous agents, and complex reasoning frameworks. Relevance now means providing the precise factual context an AI needs to complete a downstream task.

Looking ahead, the grand challenges lie in building foundation models that are multimodal, multilingual, and computationally sustainable. As IR becomes ever more deeply embedded in the fabric of artificial intelligence, its continued evolution will be crucial—not just for the future of search, but for the future of intelligent systems themselves.